Reformulating a Problem#

As explained in the Getting Started with D-Wave Solvers guide, the first step of solving your problem is to state it; for example:

How do I assign a color to each region of a map such that any two regions sharing a border have different colors? (This is the well-known Map Coloring problem.)

How do I find values of the binary variables that satisfy the satisfiability (SAT) problem,

\[(x_1 \vee \overline{x}_2 ) \wedge (\overline{x}_1 \vee x_2)\]How do I place routers in a communications network for maximum coverage of all network nodes? (This can be posed as the vertex cover problem as done in the vertex cover example.)

The next step is to map the problem to an objective function, usually in Ising or QUBO formats[1] if you are submitting to a quantum sampler, known as the quantum computer’s native formulations. Low-energy states of the objective function represent good solutions to the problem.

This chapter presents some general guidance, the following reformulation techniques[2], and some extended reformulation examples:

Some of these techniques are intended for use with quantum samplers and the hybrid solvers in the Leap service that accept binary quadratic models; for example, if you are using a constrained quadratic model (CQM) solver, you can state constraints in a straightforward manner.

The sections also provide references to examples and literature to guide understanding and implementation.

Note

Some sections describe the techniques in formal mathematical formulation, which might be abstract, but those typically also provide simple examples for users looking for practical guidance.

General Guidance on Reformulating#

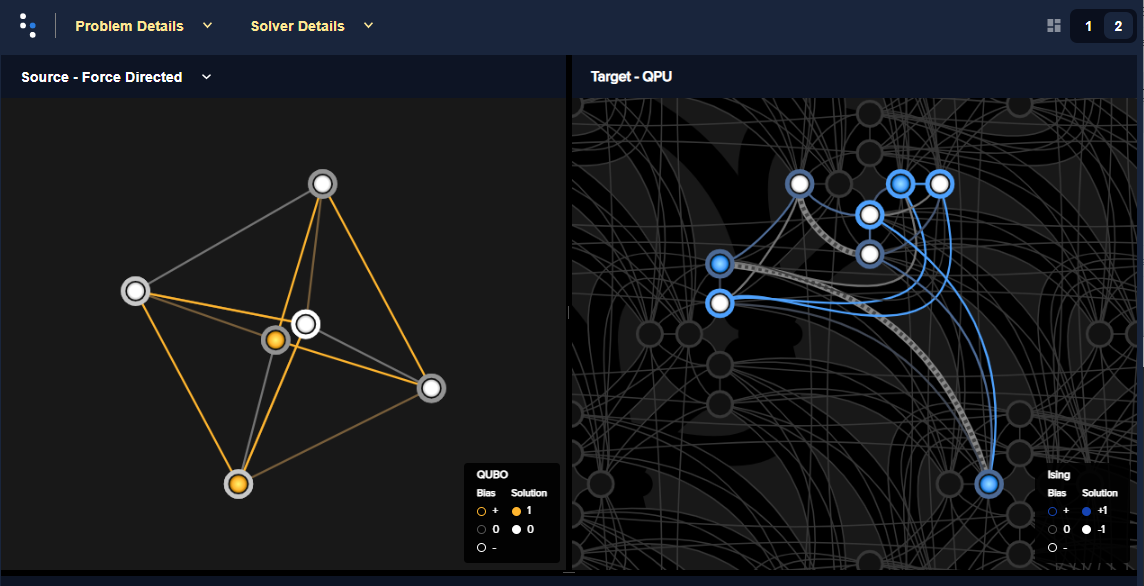

While the Leap service’s hybrid solvers can accept arbitrarily structured BQMs of huge size, problems submitted directly to the quantum computer must be formulated to be compatible with the constraints of the physical system and as robust as possible to its control errors[3].

The QPU Solver Datasheet guide provides details on the physical system and its integrated control errors (ICE).

Reformulation Steps#

To map a problem to a quadratic or nonlinear model, you can try the following steps.

Write out the objective and constraints.

This should be written out in terms of your problem domain, not necessarily in math format.

The objective of a problem is what you are looking to minimize[4].

A constraint in a problem is a rule that you must follow; an answer that does not satisfy a given constraint is called infeasible, it’s not a good answer, and might not be usable at all. For example, a travelling salesperson cannot be in two cities at once or the trucks in your problem may not be able to hold more than 100 widgets.

There may be more than one way to interpret your problem in terms of objectives and constraints.

Convert objective and constraints into math expressions.

Decide on the type of variables to best formulate it:

Binary

Does your application optimize over decisions that could either be true (or yes) or false (no)? For example,

Should the antenna transmit or no?

Did a network node experience failure?

Discrete

Does your application optimize over several distinct options? For example,

Which shift should employee X work?

Should the state be colored red, blue, green or yellow?

Integer

Does your application optimize the number of something? For example,

How many widgets should be loaded onto the truck?

Continuous

Does your application optimize over an uncountable set? For example,

Where should the sensor be built?

Once you think of a problem in these terms, you can assign a variable for each question.

Next ask about the degree the problem can likely be formulated as:

Quadratic

Are its relationships defined pairwise? For example,

In the structural imbalance example each pair of people in the network is either friendly or hostile.

Higher-Order

Does your application have relationships between multiple variables at once? For example,

Simulating an AND gate

Next, transform objective and constraints into math expressions. For binary variables, this can often be done with truth tables if you can break the problem down into two- or three-variable relationships. For other variables and non-quadratic degrees, you can try techniques such as Non-Quadratic (Higher-Degree) Polynomials and Ocean tools such as Higher-Order Models.

Reformulate as a model.

Different types of expressions require different strategies. Expressions derived from truth tables may not need any adjustments. The D-Wave Problem-Solving Handbook provides a variety of reformulation techniques; some common reformulations are:

Squared terms: QUBO and Ising models do not have squared binary variables. In QUBO format, its 0 and 1 values remain unchanged when squared, so you can replace any term \(x_i^2\) with \(x_i\). In Ising format, its -1 and +1 values always equal 1 when squared, so you can replace any term \(s_i^2\) with the constant 1.

Maximization to minimization: if your objective function is a maximization function (for example, maximizing profit), you can convert this to a minimization by multiplying the entire expression by -1. For example:

\[\mbox{arg max} (3v_1+2v_1v_2) = \mbox{arg min} (-3v_1-2v_1v_2)\]Equality to minimization: if you have a constraint that is an equality, you can convert it to a minimization expression by moving all arguments and constants to one side of the equality and squaring the non-zero side of the equation. This leaves an expression that is satisfied at its smallest value (0) and unsatisfied at any larger value (>0). For example, the equation \(x+1=2\) can be converted to \(\min_x[2-(x+1)]^2\), which has a minimum value of zero for the solution of the equation, \(x=1\).

This approach is useful also for \(n \choose k\) constraints (selection of exactly \(k\) of \(n\) variables), where you disfavor selections of greater or fewer than \(k\) variables with the penalty \(P = \alpha (\sum_{i=1}^n x_i - k)^2\), where \(\alpha\) is a weighting coefficient (see penalty method) used to set the penalty’s strength.

Combine expressions.[5]

Once you have written all of the components (objective and constraints) as models, for example BQMs, make a final model by adding all of the components. Typically you multiply each expression by a constant that weights the different constraints against each other to best reflect the requirements of your problem. You may need to tune these multipliers through experimentation to achieve good results. You can see examples in Ocean software’s collection of code examples on GitHub.

For maximization see step 3.

When using penalty models for constraints.

Reformulation Considerations#

You can frequently reformulate a problem in a variety of ways, some of which may be more performant than others. This section notes some general considerations and common practices for successful reformulation.

Compare Approaches#

Start with the simplest possible method you can find to formulate your problem in the supported format of your chosen solver (BQM, CQM, etc). If your formulation does not provide good solutions (with the solver properly configured), consider alternative formulations. A formulation that has the disadvantage of increasing the number of variables might compensate by being more amenable to minor-embedding; a different formulation might produce a smaller range of bias values.

Variable Proliferation#

Some reformulations may create very large numbers of variables; for example, integer-to-binary-variables conversion typically does. There may be alternative formulations that require fewer variables.

For example, although you may be able to derive a penalty by conversion to a linear equality or by conversion to a linear inequality, it may produce better solutions to apply the techniques of the non-linear constraints section to construct a penalty with fewer ancillary variables. [6]

Equality constraints, \(\sum_{i=0}^N a_ix_i == b\), standardly formulated as BQMs,

require about \(N^2/2\) biases. For 20 such constraints, you need about 200 biases; for 2000 constraints you already need about two million biases.

For a particular example, consider the constraint \(x_1\vee x_2\vee x_3\) (which is useful for solving a 3-SAT). You can express it as the inequality \(x_1+x_2+x_3\ge 1\) and convert it to the penalty function

The two ancillary variables \(a_1\) and \(a_2\) are necessary to represent the possible slack values 0, 1, and 2. However, the penalty function

represents the same constraint using a single ancillary variable.[7]

In fact, this representation is commonly used to reduce 3-SAT to MAX-2-SAT.

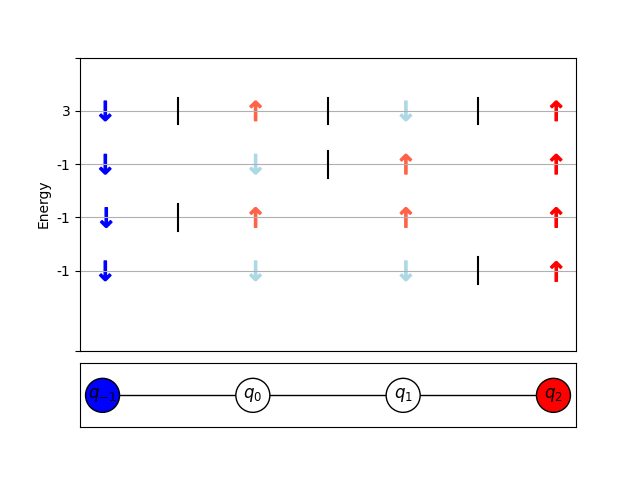

Section Energy Gap shows a small example of two formulations of the same penalty function with different numbers of ancillary variables.

Scale of Biases#

The scale of biases—the difference between the smallest and largest biases in your BQM—can affect performance. For QPU solvers, your BQM’s linear and quadratic coefficients translate in the end to qubit and coupler biases; if your problem results in, for example, a smallest bias of \(h_{12}=-0.032\) and a largest bias of \(h_{36}=3405\), when these biases are scaled down to the range of biases supported on the QPU, h_range, the small bias is effectively zero.

Pay attention to the scale of biases in your selected formulation—sometimes small formulation changes can produce much lower scales.

Section Problem Scale shows a code example.

Clearer Representations#

Some formulations might be more intuitive than others, and therefore easier to use and debug. For example, you can lower-bound a QUBO by rewriting without any negative coefficients, using negative literals instead: express contributions such as \(-3 x_1 x_2\) as either

The alternative expressions clearly show a lower bound of \(-3\).

Similarly, some formulations allow you to make the value of the energy meaningful. This can be useful in understanding the quality of solutions.

Further Information#

Algorithms Implemented in Ocean Software#

Some problem categories are common enough that you can find existing implementations of the reformulation.

Example: Graph Problems#

Ocean software provides algorithms for many known graph problems.

This example demonstrates a problem of finding the largest group of friends who

all know each other in a social setting. It states the problem as the graph

problem maximum clique.

Instead of working out the mathematical mapping to a BQM yourself, you can

use the dwave_networkx.clique_number() function.

>>> import networkx as nx

>>> import dwave_networkx as dnx

>>> import dimod

...

>>> # Create an illustration graph

>>> G = nx.caveman_graph(2, 4)

>>> dnx.algorithms.clique.clique_number(G, dimod.ExactSolver())

4

Example: Constraints#

Ocean software provides generic quadratic-model generators.

This example shows how you can use a

dimod BQM generator for a problem

that includes a constraint \(n \choose k\), which requires that exactly

\(k\) of \(n\) variables be selected. The

dimod.generators.combinations() function builds a BQM that is minimized

when exactly \(k\) of its variables equal 1.

>>> import dimod

...

>>> bqm_4_2 = dimod.generators.combinations(['x1', 'x2', 'x3', 'x4'], 2)

Adding the bqm_4_2 BQM to a problem BQM with variables

x1, x2, x3, x4, with a suitable weight (see

penalty method) makes it

probable that exactly two of the four variables are 1 in returned solutions.

>>> print(dimod.ExactSolver().sample(bqm_4_2).first)

Sample(sample={'x1': 1, 'x2': 1, 'x3': 0, 'x4': 0}, energy=0.0, num_occurrences=1)

Further Information#

See the Ocean software documentation for available BQM-generating functionality.

Native QPU Formulations: Ising and QUBO#

You may be able to formulate some problems directly as binary quadratic models, as shown in the examples of the Getting Started with D-Wave Solvers guide.

The D‑Wave QPU can be viewed as a heuristic that minimizes Ising objective functions using a physically realized version of quantum annealing.

In Ising formulation, given a problem of \(N\) variables corresponding to physical Ising spins, \(\vc s=[s_1,...,s_N]\), and a configuration of \(h_i\) local fields and \(J_{i,j}\) couplings between spins, the QPU (in its solution spin values) minimizes the objective function

It is straightforward to translate between Ising and QUBO representations. In QUBO formulation, \(N\) binary variables are represented as an upper-diagonal matrix \(\vc{Q}\), where diagonal terms are the linear coefficients and the nonzero off-diagonal terms are the quadratic coefficients. The objective function to minimize is

For many small problems—or large problems amenable to being broken into small parts—you can use truth tables to construct a BQM. For many others, the original mathematical formulation can simply be mapped to Ising or QUBO format. Others might enable formulation through known elements such as Boolean logic or simple penalty models.

Example: Truth-Table Formulation#

As shown in the Getting Started with D-Wave Solvers guide, you can formulate a QUBO in the following steps:

Tabulate all the problem’s possible states in a truth table.

Penalize undesirable states with higher energies.

Set QUBO coefficients that result in the selected energies for each state.

This example uses a truth table to formulate the NOT constraint, \(v_2 = \neg v_1\). A penalty (energy gap) of 1 is chosen.

Table 11 shows all four possible states of \(v_1, v_2\) in columns two and three, whether the state is feasible for constraint \(v_2 = \neg v_1\) in column four, and a relative penalty of 1 for the infeasible states in the rightmost column.

State |

\(v_1\) |

\(v_2\) |

\(v_2 = \overline{v_1}\) ? |

Penalty |

|---|---|---|---|---|

1 |

0 |

0 |

No |

p |

2 |

0 |

1 |

Yes |

p-1 |

3 |

1 |

0 |

Yes |

p-1 |

4 |

1 |

1 |

No |

p |

Substituting the values of the table’s variables for variables \(q_1, q_2\) in the two-variable QUBO model,

and the desired penalty for the resulting energy produces for the four rows of the table these four equalities:

Giving the following four equations with four variables:

Solving these equations by simple substitution gives \(E(q_1, q_2) = -q_1 - q_2 +2 q_1 q_2\), which is equivalent to the QUBO given in Table 17 of the Elementary Boolean Operations section, with an offset of 1 (this formulation has a ground-state energy of -1 versus the other formulation’s ground-state energy of 0).

Example: Formulation by Similarity#

Problems formulated as either a maximum independent set (MIS), which seeks the largest subset of vertices of graph \(G=(V,E)\) that have no common edge, or a maximum cut, which seeks a subset of vertices that maximizes the number of edges between the subset and remaining vertices, have a noticeable similarity to the Ising format.

In MIS formulation, the objective function is:

Clearly this formulation is similar to Ising with local fields \(h_i=-1\) and only positive coupling strengths \(J_{i,j}\) (represented by penalties \(M_{i,j} > 1\)).

You can formulate such problems as BQMs yourself but Ocean software’s dwave_networkx package provides functions for these graph problems and more.

Example: Formulation with Boolean Logic#

The technique of formulating problems with Boolean operations is very versatile: modern computing is built on Boolean gates, the scope of addressable problems is immense.

To express a constraint that variable \(z\) should be 1 if either or both of variables \(x1\) or \(x2\) are one, you can use an OR gate. Ocean software’s dimod package provides functionality for creating BQMs from Boolean logic:

>>> from dimod.generators import or_gate

>>> from dimod import ExactSolver

...

>>> # Create an OR constraint

>>> bqm_or = or_gate('x1', 'x2', 'z')

>>> # View best solutions

>>> print(ExactSolver().sample(bqm_or).lowest(atol=0.7))

x1 x2 z energy num_oc.

0 0 0 0 0.0 1

1 0 1 1 0.0 1

2 1 1 1 0.0 1

3 1 0 1 0.0 1

['BINARY', 4 rows, 4 samples, 3 variables]

The lowest-energy solutions are all feasible solutions for the constraint.

The factoring example expresses the constraints of a CSP with Boolean logic. The approach used in that example is to express \(P=ab\) as a CSP with a binary multiplication circuit.

Section Elementary Boolean Operations below shows QUBO formulation for a number of common Boolean operators.

Ising or QUBO?#

Some problems are better formulated as QUBOs, some as Ising models. The maximum independent set of the Example: Formulation by Similarity section clearly maps well to an Ising formulation, for example, as does the structural-imbalance problem solved in structural-imbalance example.

Consider both when mapping a problem and compare ease of formulation and later solution quality.

You can use the simple arithmetic mapping, \(\vc{s}=2\vc{x}-\vc{1}\), where \(\vc{1}\) is the vector all of whose components are 1, to translate between these native formulations:

Thus,

Example#

The Example Reformulation: Map Coloring section develops an objective function, in QUBO formulation,

where \(q_B\) is a variable representing blue and \(q_G\) green.

You can view this Ising formulation using the inverse of the \(\vc{s}=2\vc{x}-\vc{1}\) Ising-to-QUBO conversion. For this objective function, the variables are

The inverse conversion in scalar form, \(q_i=\frac{s_i+1}{2}\), is a simple mathematical manipulation of \(s_i=2x_i-1\), where the QUBO variables in scalar form are \(x_1,x_2=q_B,q_G\). Substituting in the objective function yields

Ocean software can automate such conversions for you:

>>> import dimod

...

>>> dimod.qubo_to_ising({('qb', 'qb'): -1, ('qg', 'qg'): -1, ('qb', 'qg'): 2})

({'qb': 0.0, 'qg': 0.0}, {('qb', 'qg'): 0.5}, -0.5)

Table 12 shows the two formulations’ identical energy functions, with minimum levels for the valid states of a single selected color, either blue (\(0,1\) in a QUBO or \(-1,1\) in an Ising model) or green (\(1,0\) or \(1,-1\)).

\(\mathbf{q_B,q_G}\) |

\(\mathbf{s_B,s_G}\) |

\(\mathbf{\text{E}(a_i, b_{i,j}; q_i)=\text{E}(h_i, j_{i,j}; s_i)}\) |

|---|---|---|

\(0,0\) |

\(-1,-1\) |

\(0\) |

\(0,1\) |

\(-1,1\) |

\(-1\) |

\(1,0\) |

\(1,-1\) |

\(-1\) |

\(1,1\) |

\(1,1\) |

\(0\) |

For example, the state \(q_B,q_G=0,1\) of the second row is represented by the QUBO penalty function,

while the equivalent state \(s_B,s_G=-1,1\) of that same row is represented by the Ising penalty function,

with the same resulting penalty.

Note that the constant \(-\frac{1}{2}\) in the Ising formulation above, which does not affect the relative energies, does not map into the formulation used to configure the D‑Wave system, and is discarded.

Further Information#

[Bar1982] shows that Ising and QUBO problems are NP-hard.

[Ber1999] discusses a broad tractable subclass of Ising problems in the context of rounding algorithms.

[Bru1967] provides a history of the Lenz-Ising model and its uses.

[Cou2009] and [Sch2009] discuss particular cases of tractable Ising problems.

[Koc2004] and related papers show that QUBOs are an effective representation for modeling and solving a variety of discrete optimization problems.

[Kol2004] characterizes binary-variables energy functions that can be minimized by graph cuts.

[Luc2013] and [Lod2020] describe Ising formulations of many NP problems.

Weighted MAX-2-SAT#

The SAT problem problem is to decide whether the literals in its clauses can be assigned values that satisfy all the clauses; i.e., produce a value of \(1\). In CNF, the SAT is satisfied only if all its clauses are satisfied.

Literal is a Boolean variable such as \(x_i\) and \(\overline{x_i}\).

Clause is a disjunction of literals such as \(x_i \vee \overline{x_j}\).

conjunctive normal form (CNF) conjoins clauses by the AND operator; i.e., (clause 1) \(\wedge\) (clause 2).

The Simple Satisfiability (SAT) Example of the Getting Started with D-Wave Solvers guide demonstrated an intuitive formulation of a small SAT as a BQM. This section provides a more methodological approach.

A 2-SAT has \(m\) clauses of 2 literals each. A MAX-2-SAT is the problem of assigning values that maximize the number of satisfied clauses (an optimization problem; a second version is to decide whether some specified number of clauses can be satisfied). Weighted MAX-SAT assigns each clause a positive weight so the violation of clause \(c\) incurs a cost \(w_c\) and the problem is to maximize the weight of satisfied clauses

where \(\ell_{ci,1}\) and \(\ell_{ci,2}\) are the two literals of clause \(ci\), and \(w_{ci}\) is its weight.

To reformulate a MAX-2-SAT problem as a QUBO, note that (1) maximizing the weight of satisfied clauses is equivalent to minimizing the weight of unsatisfied clauses, and (2) by De Morgan’s laws, \(\overline{x_i \vee x_j} = \overline{x_i} \wedge \overline{x_j}\).[8] This means that the general weighted MAX-2-SAT problem can be written as a posiform (in the context of machine learning problems, a polynomial expression that conjoins AND clauses with OR operations) that minimizes the weight of unsatisfied clauses:

For an intuitive understanding, verify with a truth table that clause \(x_1 \vee \overline{x}_2\) is unsatisfied if \(\overline{x}_1 \wedge x_2\) is true, and this conjunction is equivalent to the product of Boolean variables \(\overline{x}_1 x_2\). If weighted clause \((x_1\vee \overline{x}_2; 3)\) is unsatisfied a cost of 3 is incurred, thus the penalty representing the clause is \(3 \overline{x}_1 x_2\).

Example: Converting a Three-Variable MAX-2-SAT to a QUBO#

Consider the following weighted MAX-2-SAT on three variables, \(x_1,x_2,x_3\), with weights \(w_{c1}=3, w_{c2}=1, w_{c1}=4\), which maximizes the number of satisfied clauses on,

Obtain the QUBO representation using these steps:

Formulate as a posiform (using \(\overline{x_i \vee x_j} = \overline{x_i} \wedge \overline{x_j}\) and minimizing the weight of unsatisfied clauses as explained above):

Write negated literals \(\overline{x_i}\) as \((1-x_i)\):

In this last expression, the example weighted MAX-2-SAT has been reformulated as a QUBO in scalar notation,

where \(a_1=0, a_2=3, a_3=3\) are the linear coefficients and \(b_{1,2}=-3, b_{2,3}=-4\) the quadratic coefficients.

Further Information#

[Bon2007] generalizes the resolution rule for satisfiability to weighted MAX-SAT, which enables the simplification of some weighted MAX-2-SAT problems.

[Coo1971] and [Lev1973] identify satisfiability as the first NP-complete problem. (Finding a satisfiable assignment or proving that there is no satisfying assignment for 2-SAT can be done in polynomial time. However, 3-SAT which allows clauses of up to 3 literals is NP hard and there are problem instances which cannot be solved in polynomial time.)

[Wat2006] discusses the average-case complexity of the MAX-2SAT problem.

Non-Quadratic (Higher-Degree) Polynomials#

To reduce problems with interactions between more than pairs of variables to a QUBO, introduce and minimize over ancillary variables.

A good procedure is to select the pair of variables that is most common amongst all terms with more than pairwise interactions, and replace it with an ancillary variable in all those terms. Repeat until no more terms of 3 or more variables remain. Note that subsequent iterations may involve products of ancillary variables if these ancillary pairs are the most common amongst the terms.

An interaction involving 4 or more variables can be reduced to pairwise by sequentially introducing new variables and reducing the degree of the interaction by 1 at each step.

Below are two techniques you can use. Ocean software can automate such reduction for you:

>>> import dimod

...

>>> cubic = {('x',): -1, ('y',): 1, ('z',): 1.5, ('x', 'y'): -1, ('x', 'y', 'z'): -2}

>>> print(dimod.make_quadratic(cubic, 5, dimod.BINARY))

BinaryQuadraticModel({x: -1.0, y: 1.0, z: 1.5, z*x: 15.0},

{('x', 'y'): -1, ('x', 'z'): 5.0, ('x', 'z*x'): -10.0, ('y', 'z*x'): -2,

('z', 'z*x'): -10.0}, 0.0, 'BINARY')

Polynomial Reduction by Minimum Selection#

To reformulate a non-quadratic (higher-degree) polynomial to Ising/QUBO, substitute terms in the form of \(axyz\), where \(a\) is a real number, with one of the following quadratic terms:[9]

It is easy to verify, for example, that the equality for the negative coefficient, \(a<0\), holds. The term \(( x+y+z -2)\) is positive and non-zero only for \(x,y,z=1,1,1\), so multiplying by negative \(a\) yields the only negative value of \(w ( x+y+z -2)\), which is preserved for \(w=1\). All other states of \(x,y,z\) result in \(( x+y+z -2) \le 0\), so multiplied by \(a<0\) yield zero or positive values, which are minimized to zero by \(w=0\).

This technique of reduction by minimum selection exploits the identity,

where \(x,y,z\) are binary variables in some pseudo-binary function being minimized and \(w\) is the ancillary binary variable introduced to substitute quadratic terms for cubic terms.

Table 13 shows that the cubic and quadratic formulations are identical. In this table, column \(\mathbf{x,y,z}\) is all possible states of the \(xyz\) term and column \(\mathbf{xyz}\) is the corresponding values of the \(xyz\) term; column \(\mathbf{x+y+z-2}\) is an intermediate calculation, the right side of the equality without the ancillary variable and before maximization, shown for clarity; and column \(\mathbf{\max_w\left\{w(x+y+z-2)\right\}}\) is the final result of calculating the right side of the equality.

\(\mathbf{x,y,z}\) |

\(\mathbf{xyz}\) |

\(\mathbf{x+y+z-2}\) |

\(\mathbf{\max_w\left\{w(x+y+z-2)\right\}}\) |

|---|---|---|---|

\(0,0,0\) |

\(0\) |

\(-2\) |

\(0|_{w=0}\) |

\(0,0,1\) |

\(0\) |

\(-1\) |

\(0|_{w=0}\) |

\(0,1,0\) |

\(0\) |

\(-1\) |

\(0|_{w=0}\) |

\(0,1,1\) |

\(0\) |

\(0\) |

\(0|_{w=0,1}\) |

\(1,0,0\) |

\(0\) |

\(-1\) |

\(0|_{w=0}\) |

\(1,0,1\) |

\(0\) |

\(0\) |

\(0|_{w=0,1}\) |

\(1,1,0\) |

\(0\) |

\(0\) |

\(0|_{w=0,1}\) |

\(1,1,1\) |

\(1\) |

\(1\) |

\(1|_{w=1}\) |

Example#

This simple example solves higher-order polynomial \(-x +2y +3z +xy -2xyz\) in two ways: first, using an Ocean software, higher-order solver; second, using a quadratic solver on the reduced form.

>>> import dimod

...

>>> hubo = {('x', 'x'): -1, ('y', 'y'): 2, ('z', 'z'): 3, ('x', 'y'): 1,

... ('x', 'y', 'z'): -2}

>>> qubo = {('x', 'x'): -1, ('y', 'y'): 2, ('z', 'z'): 3, ('w', 'w'): 4,

... ('x', 'y'): 1, ('w', 'x'): -2, ('w', 'y'): -2, ('w', 'z'): -2}

>>> print(dimod.ExactPolySolver().sample_hubo(hubo).first)

Sample(sample={'x': 1, 'y': 0, 'z': 0}, energy=-1.0, num_occurrences=1)

>>> print(dimod.ExactSolver().sample_qubo(qubo).first)

Sample(sample={'w': 0, 'x': 1, 'y': 0, 'z': 0}, energy=-1.0, num_occurrences=1)

Polynomial Reduction by Substitution#

To reformulate a non-quadratic polynomial to Ising/QUBO, use this substitution to reduce a triplet interaction to pairwise,

where \(M>1\) is a penalty weight and \(P\) is some penalty function that has lowest value when \(z = x_1 x_2\). This reformulation is quadratic—the lefthand term, \(z x_3\), multiplies two variables, \(z\) and \(x_3\), and the righthand term, \(P(x_1,x_2;z)\) is a quadratic penalty function—and has lowest values for configurations of variables where \(z x_3 = x_1 x_2 x_3\).

Example Example: Boolean Variables shows such a reduction for binary-valued variables while Example: Spin Variables shows it for spin-valued variables.

Example: Boolean Variables#

This example of reduction by substitution represents the multiplication \(x_1 x_2\) as Boolean[10] constraint \(z\Leftrightarrow x_1\wedge x_2\) (AND operation) using the quadratic penalty function given in the Table 17 table of the Elementary Boolean Operations section,

The Ising version (variables with \(\pm 1\) values) of this penalty function is \(P(s_1,s_2;s_z) = 3+s_1 s_2 - 2(s_1+s_2)s_z - s_1-s_2 + 2s_z\). However, for spin-valued variables the Boolean AND operation is not equivalent to multiplication (for values \(s_1 = s_2 = -1\), for example, \(s_1s_2 = (-1) * (-1) = 1 \neq s_1 \wedge s_2\)).

Table 14 shows the equivalence between the non-quadratic \(x_1x_2x_3\) and the minimized replacement \(\min_z \bigl\{ z x_3 + M P(x_1,x_2;z) \bigr\}\) for a penalty weight of \(M=2\). In this table, column \(x_1,x_2, x_3\) is all possible states of the triplet’s variables; column \(x_1x_2x_3\) is the corresponding values of the triplet; column \(P(x_1,x_2; z)\) are the penalties for solutions that do or do not meet the constraint \(z = x_1 x_2\); column \(zx_3 + M P\) are the corresponding values of \(z x_3 + M P(x_1,x_2;z)\); and finally, column \(\min_z \bigl\{ z x_3 + M P \bigr\}\) is the minimum value of the previous column.

\(x_1,x_2,x_3\) |

\(x_1x_2x_3\) |

\(P(x_1,x_2;z)\) |

\(zx_3 + M P\) |

\(\min_z \bigl\{ z x_3 + M P \bigr\}\) |

||

|---|---|---|---|---|---|---|

\(z=x_1x_2\) |

\(z\ne x_1x_2\) |

\(z=x_1x_2\) |

\(z\ne x_1x_2\) |

|||

\(0,0,0\) |

\(0\) |

\(0\) |

\(3\) |

\(0\) |

\(6\) |

\(0\) |

\(0,0,1\) |

\(0\) |

\(0\) |

\(3\) |

\(0\) |

\(7\) |

\(0\) |

\(0,1,0\) |

\(0\) |

\(0\) |

\(1\) |

\(0\) |

\(2\) |

\(0\) |

\(0,1,1\) |

\(0\) |

\(0\) |

\(1\) |

\(0\) |

\(3\) |

\(0\) |

\(1,0,0\) |

\(0\) |

\(0\) |

\(1\) |

\(0\) |

\(2\) |

\(0\) |

\(1,0,1\) |

\(0\) |

\(0\) |

\(1\) |

\(0\) |

\(3\) |

\(0\) |

\(1,1,0\) |

\(0\) |

\(0\) |

\(1\) |

\(0\) |

\(2\) |

\(0\) |

\(1,1,1\) |

\(1\) |

\(0\) |

\(1\) |

\(1\) |

\(2\) |

\(1\) |

Example: Spin Variables#

This example of reduction by substitution represents the multiplication \(s_1 s_2\) as spin-valued constraint \(z\Leftrightarrow s_1 \odot s_2\) (where \(\odot\) is the Boolean XNOR operation)[11] using a quadratic penalty function,

with auxiliary variables \(z, a_0, a_1\).

The table below shows how the XNOR operation (a negation of the XOR operation, \(\oplus\)) is equivalent to multiplication for spin-valued variables. In this table, the lefthand column shows all possible multiplications of two spin-valued variables, the middle column shows the values of XOR for those same spin-valued variables, and the righthand column the values of XNOR.

\(\mathbf{s_1s_2}\) |

\(\mathbf{s_1 \oplus s_2}\) |

\(\mathbf{s_1 \odot s_2}\) |

|---|---|---|

\((-1) * (-1) = +1\) |

\((-1) \oplus (-1) = -1\) |

\((-1) \odot (-1) = +1\) |

\((-1) * (+1) = -1\) |

\((-1) \oplus (+1) = +1\) |

\((-1) \odot (+1) = -1\) |

\((+1) * (-1) = -1\) |

\((+1) \oplus (-1) = +1\) |

\((+1) \odot (-1) = -1\) |

\((+1) * (+1) = +1\) |

\((+1) \oplus (+1) = -1\) |

\((+1) \odot (+1) = +1\) |

Note

There are many ways to derive such a penalty function. For example, you can find a penalty function for XNOR using the dimod package as follows:

>>> from dimod.generators import xor_gate

...

>>> bqm = xor_gate('s1', 's2', 'z', 'aux')

>>> bqm.flip_variable('z')

>>> bqm_xnor = bqm.change_vartype('SPIN', inplace=False)

>>> bqm_xnor.linear

{'s1': -0.5, 's2': -0.5, 'z': -0.5, 'aux': 1.0}

Table 15 shows the equivalence between the non-quadratic \(s_1s_2s_3\) and the minimized replacement \(\min_z \bigl\{ z s_3 + M P(s_1,s_2;z, a_0, a_1) \bigr\}\) for a penalty weight of \(M=2\). In this table, column \(s_1, s_2, s_3\) is all possible states of the triplet’s variables; column \(s_1s_2s_3\) is the corresponding values of the triplet; column \(z, a_0, a_1\) shows the values of the auxiliary variables that minimize the penalty \(P(s_1,s_2;z, a_0, a_1)\); and finally, column \(\min_z \bigl\{ z x_3 + M P \bigr\}\) is the minimum value of the substitute quadratic formulation.

\(s_1,s_2,s_3\) |

\(s_1s_2s_3\) |

\(z,a_0,a_1\) |

\(\min_z \bigl\{ z x_3 + M P \bigr\}\) |

|

|---|---|---|---|---|

\(-1,-1,-1\) |

\(-1\) |

\(1, -1, -1\) |

\(-1\) |

|

\(-1,-1,1\) |

\(1\) |

\(1, -1, -1\) |

\(1\) |

|

\(-1,1,-1\) |

\(1\) |

\(-1, -1, -1\) |

\(1\) |

|

\(-1,1,1\) |

\(-1\) |

\(-1, -1, -1\) |

\(-1\) |

|

\(1,-1,-1\) |

\(1\) |

\(-1, -1, 1\) |

\(1\) |

|

\(1,-1,1\) |

\(-1\) |

\(-1, -1, 1\) |

\(-1\) |

|

\(1,1,-1\) |

\(-1\) |

\(1, 1, -1\) |

\(-1\) |

|

\(1,1,1\) |

\(1\) |

\(1, 1, -1\) |

\(1\) |

|

Example#

The Example Reformulation: Circuit Fault Diagnosis example demonstrates the technique.

Further Information#

[Bor2002] discusses posiforms as a useful representation for pseudo-Boolean functions and shows that reformulating a problem of \(N\) terms requires at most \(N\) ancillary variables.

[Dat2014] and [Tan2015] discuss tricks to quickly reduce higher-order polynomials to QUBOs in the context of factoring.

[Ish2011] discusses reduction by minimum selection and by substitution and introduces an extension of the reduction by minimum selection.

[Ohz2020] proposes an approach to dense optimization problems using the Hubbard-Stratonovich transformation to change squared terms into linear terms via the inversion of the Gaussian integral.

[Yu2021] applies [Ohz2020] to a bus charging scheduling problem.

[Wan2020] provides a triplet-reduction formulation in the context of factoring.

Constraints (Conflict-Graph Technique)#

Many real-world problems include constraints. For example, you might have a map-coloring problem that constrains adjacent states to select different colors or a delivery problem with constraints on the weights different vehicles can transport.

One standard approach of several that converts a constraint satisfaction problem (CSP) into a QUBO uses a conflict graph. Mathematically, given a set of constraints \(\mathcal{C} = \{C_\alpha(\vc{x}_\alpha)\}_{\alpha=1}^{|\mathcal{C}|}\), this reformulation seeks a solution \(\vc{x}\) that satisfies all constraints; i.e., \(\bigwedge_{C_\alpha\in\mathcal{C}} C_\alpha(\vc{x})\) is true.

Such a set of constraints could be, for example:

Exactly one of three time-slots is chosen for the delivery: \(t_1 + t_2 + t_3 = 1\)

Two trucks cannot be serviced together: \(v_1 \ne v_2\)

This technique introduces a dual variable[12], \(y_\alpha\), for each constraint \(C_\alpha\). The domain of \(y_\alpha\) is the set of feasible solutions to \(C_\alpha\). Coupling between dual variables arises from constraints \(C_\alpha\) and \(C_\beta\) having common variables in their scopes. This dual constraint formulation defines a conflict graph. The nodes of the conflict graph are the possible feasible settings for each \(y_\alpha\), and you form edges between the nodes \(y_\alpha,y_\beta\) if there is any conflicting setting of shared variables.

These are called “dual” as distinct from the ordinary variables of the problem.

If there is an independent set in the conflict graph of size \(|\mathcal{C}|\) then—because the edge constraint ensures no two elements that are in conflict are in the independent set—selecting each \(y_\alpha\) in the independent set, which defines values for all the \(X\) variables in the scope of \(C_\alpha\), satisfies the constraint problem.

The maximum independent set (MIS) is easily represented as a QUBO, as shown in Native QPU Formulations: Ising and QUBO.

Example#

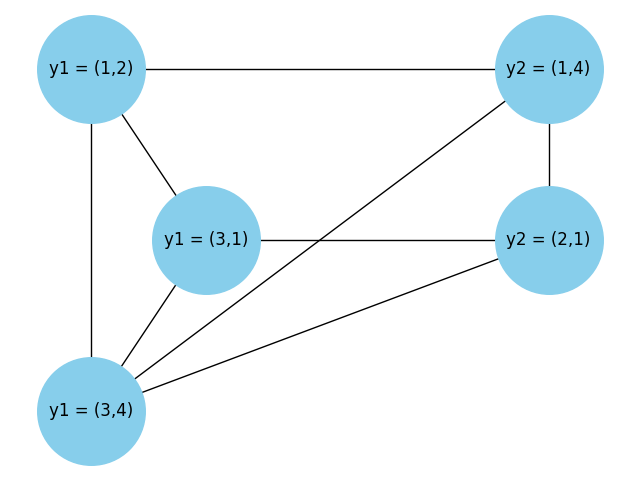

A problem has two constraints (see also the table below):

\(C_1\) has scope \(X_1,X_2\) with feasible sets \(\{(1,2),(3,1),(3,4)\}\).

\(C_2\) has scope \(X_2,X_3\) with feasible sets \(\{(1,4),(2,1)\}\).

Note

Saying a constraint “has scope” means that the constraints are on those variables; e.g., \(C_1\) constrains the values that variables \(X_1,X_2\) are allowed. The “feasible sets” for those variables are the values that satisfy the constraint; e.g., \(C_1\) allows values \(X_1=1; X_2=2\) in the problem’s solution, but a solution with values \(X_1=1; X_2=1\) breaks this constraint.

To use this method, you define dual variables \(y_1 \in \{(1,2),(3,1),(3,4)\}\) and \(y_2 \in \{(1,4),(2,1)\}\).

Notice that the two constraints share variable \(X_2\) so whatever value \(X_2\) is assigned, it must be the same value in both constraints. This means that of the possible \(y_1,y_2\) combinations only \(y_1=(1,2), y_2=(2,1)\) and \(y_1=(3,1), y_2=(1,4)\) are allowed because other combinations have conflicting values of \(X_2\).

\(C_1\) |

\(C_2\) |

||||

|---|---|---|---|---|---|

\(X_1\) |

\(X_2\) |

\(X_2\) |

\(X_3\) |

\(Y_1\) |

\(Y_2\) |

1 |

2 |

1 |

4 |

1,2 |

1,4 |

3 |

1 |

2 |

1 |

3,1 |

2,1 |

3 |

4 |

3,4 |

|||

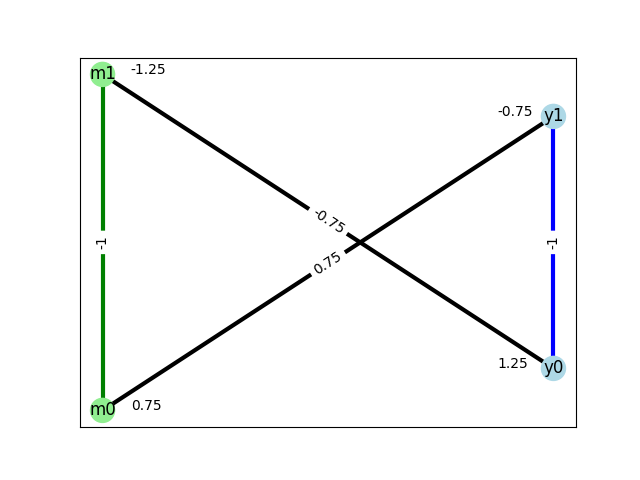

You can now create a conflict graph: nodes are the possible feasible settings for each \(y_\alpha\) and edges are drawn between nodes \(y_\alpha,y_\beta\) if there is a conflicting setting of shared variables.

The conflict graph is shown in Figure 41. Note that there is no edge between nodes \(y_1 = (1,2)\) and \(y_2 = (2,1)\), both of which have \(X_2=2\), or between \(y_1 = (3,1)\) and \(y_2 = (1,4)\), both of which have \(X_2 = 1\).

Fig. 41 Dual graph of a small CSP consisting of three variables \(X_1\), \(X_2\), and \(X_3\) each having domain \(\{1,2,3,4\}\), and two constraints \(C_1(X_1,X_2)\), \(C_2(X_2,X_3)\) having scopes \(\{X_1, X_2\}\) and \(\{X_2, X_3\}\) respectively.#

You can use the dwave_networkx package to create a QUBO from the maximum independent set of the conflict graph:

>>> import dwave_networkx as dnx

>>> import networkx as nx

>>> import dimod

...

>>> G = nx.Graph()

>>> G.add_nodes_from({"y1 = (1,2)", "y1 = (3,1)", "y1 = (3,4)", "y2 = (1,4)",

... "y2 = (2,1)"})

>>> G.add_edges_from({("y1 = (1,2)", "y1 = (3,1)"), ("y1 = (1,2)", "y1 = (3,4)"),

... ("y1 = (1,2)", "y2 = (1,4)"), ("y1 = (3,1)", "y1 = (3,4)"),

... ("y1 = (1,2)", "y1 = (3,4)"), ("y1 = (3,1)", "y2 = (2,1)"),

... ("y1 = (3,4)", "y2 = (1,4)"), ("y1 = (3,4)", "y2 = (2,1)"),

... ("y2 = (1,4)", "y2 = (2,1)")})

>>> Q = dnx.algorithms.independent_set.maximum_weighted_independent_set_qubo(G)

Solutions to the QUBO meet the constraints (\(y_1=(1,2), y_2=(2,1)\) and \(y_1=(3,1), y_2=(1,4)\)):

>>> print(dimod.ExactSolver().sample_qubo(Q).lowest())

y1 = (1,2) y1 = (3,1) y1 = (3,4) y2 = (1,4) y2 = (2,1) energy num_oc.

0 1 0 0 0 1 -2.0 1

1 0 1 0 1 0 -2.0 1

['BINARY', 2 rows, 2 samples, 5 variables]

Further Information#

Constraints: Soft/Hard (Conflict-Graph Technique)#

Many CSPs used in applications are formulated with two types of constraints:

“Hard” constraints cannot be broken in a viable solution.

“Soft” constraints incur a penalty if broken.

You can represent hard and soft constraints by choosing two weights \(w_H\) (large magnitude) and \(w_S\) (small magnitude) to assign to the respective constraints. You then use the technique of Constraints (Conflict-Graph Technique) but in the resulting graph find a maximum independent set of minimum weight: a weighted MIS.

The QUBO representation of weighted MIS for a graph \(G=(V,E)\) is simply \(\min_\vc{x} \bigl\{ \sum_v w_v x_v + M\sum_{(v,v')\in E} x_v x_{v'} \bigr\}\) where \(w_v\) is the weight of vertex \(v\).

The dwave_networkx package lets you

set a weight attribute in the example of

Constraints (Conflict-Graph Technique), as shown here:

>>> import dwave_networkx as dnx

>>> import networkx as nx

>>> import dimod

...

>>> G = nx.Graph()

>>> G.add_nodes_from(["y1 = (1,2)", "y1 = (3,1)", "y1 = (3,4)"], weight=3)

>>> G.add_nodes_from(["y2 = (1,4)", "y2 = (2,1)"], weight=1)

>>> G.add_edges_from({("y1 = (1,2)", "y1 = (3,1)"), ("y1 = (1,2)", "y1 = (3,4)"),

... ("y1 = (1,2)", "y2 = (1,4)"), ("y1 = (3,1)", "y1 = (3,4)"),

... ("y1 = (1,2)", "y1 = (3,4)"), ("y1 = (3,1)", "y2 = (2,1)"),

... ("y1 = (3,4)", "y2 = (1,4)"), ("y1 = (3,4)", "y2 = (2,1)"),

... ("y2 = (1,4)", "y2 = (2,1)")})

>>> Q = dnx.algorithms.independent_set.maximum_weighted_independent_set_qubo(G,

... weight="weight")

Best solutions to the QUBO still meet both constraints (first two rows with energy \(-1.333333\)), but notice the difference in energies for solutions that meet just the first constraint (rows three to five with energy \(-1.0\)) versus solutions that meet just the second constraint (last two rows with energy \(-0.333333\)):

>>> print(dimod.ExactSolver().sample_qubo(Q).lowest(atol=1.1))

y1 = (1,2) y1 = (3,1) y1 = (3,4) y2 = (1,4) y2 = (2,1) energy num_oc.

12 0 1 0 1 0 -1.333333 1

30 1 0 0 0 1 -1.333333 1

1 1 0 0 0 0 -1.0 1

3 0 1 0 0 0 -1.0 1

7 0 0 1 0 0 -1.0 1

15 0 0 0 1 0 -0.333333 1

31 0 0 0 0 1 -0.333333 1

['BINARY', 7 rows, 7 samples, 5 variables]

Using this technique on real-world problems with many constraints and probabilistic solvers, such as D‑Wave quantum computers, results in a higher likelihood of finding solutions that at least meet the more heavily weighted, “hard” constraints.

Constraints: Linear Equality (Penalty Functions)#

You can express \(m\) equality constraints on \(n\) Boolean variables, \(\vc{x}\), as \(\vc{C}\vc{x}=\vc{c}\), where \(\vc{C}\) is an \(m\times n\) matrix and \(\vc{c}\) an \(m\times 1\) vector. Mapping these constraints to QUBO formulation, the penalty function \(\vc{C}\vc{x}-\vc{c}=0\) is simply \(M \|\vc{C}\vc{x}-\vc{c}\|^2\). For \(k\) independently weighted constraints, the penalty function in QUBO formulation is

where \(m_k^=>0\) is the weight of the equality constraint \(k\), and \(\vc{C}_k\) is row \(k\) of \(\vc{C}\). This function is nonnegative and equal to zero for feasible \(\vc{x}\).

Example#

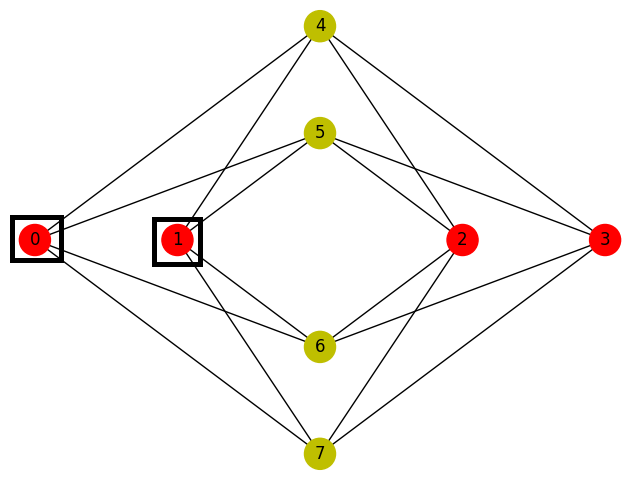

This simple example solves the problem of finding a maximum independent set (MIS), where the graph is a cell in the Chimera topology,

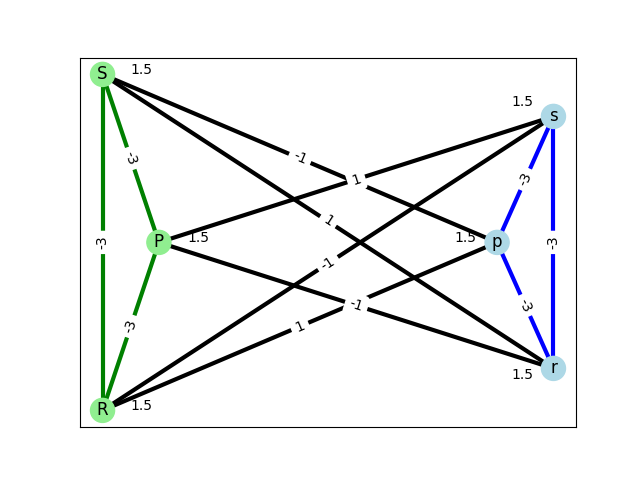

Fig. 42 A unit cell in the Chimera topology with two maximum independent sets.#

but with an added constraint that requires that either node 0 or node 1, but not both, be selected. (The example in the CSP (Inverse Verification Technique) solves it by reformulating the constraint as a Boolean gate.)

You can express this \(m=1\) constraint as \(x_0 + x_1 = 1\), an equality that holds only if either but not both of its variables \(x_0, x_1\) is 1, or in the mathematical formalism of this section, \(\vc{C}\vc{x}=\vc{c}\), as:

where the \(x_i\) variables represent the graph nodes, with a value of 1 signifying that the variable belongs to the MIS.

The penalty function for the constraint in QUBO formulation is

Dropping the constant term, which is added equally to all values of \(x_0, x_1\) and so does not favor any, you can see the penalty favors states where one and only one of the two nodes are selected:

\(x_0, x_1\) |

Penalty |

|---|---|

0, 0 |

0 |

0, 1 |

-1 |

1, 0 |

-1 |

1, 1 |

0 |

You can use the dwave_networkx package for the MIS QUBO. The code below combines the MIS and penalty QUBOs, weighting the constraint at 1.5 so the penalty for breaking this (hard) constraint is a bit higher than flipping a variable.

>>> import dwave_networkx as dnx

>>> import dimod

...

>>> # Create a BQM for the MIS

>>> G = dnx.chimera_graph(1, 1, 4)

>>> Q_mis = dnx.algorithms.independent_set.maximum_weighted_independent_set_qubo(G)

>>> bqm = dimod.BQM.from_qubo(Q_mis)

>>> # Create a BQM for the constraint

>>> Q_constraint = {(0, 0): -1, (1, 1): -1, (0, 1): 2}

>>> bqm_constraint = dimod.BQM.from_qubo(Q_constraint)

>>> bqm_constraint.scale(1.5)

>>> # Create a combined BQM

>>> bqm.update(bqm_constraint)

>>> # Print the best solutions

>>> print(dimod.ExactSolver().sample(bqm).lowest(atol=0.8))

0 1 2 3 4 5 6 7 energy num_oc.

1 1 0 1 1 0 0 0 0 -4.5 1

3 0 1 1 1 0 0 0 0 -4.5 1

0 0 0 0 0 1 1 1 1 -4.0 1

2 1 1 1 1 0 0 0 0 -4.0 1

['BINARY', 4 rows, 4 samples, 8 variables]

The two best solutions meet the constrained MIS problem while the next two, with higher energy, solve the unconstrained MIS problem.

Further Information#

[Che2021] compares performance of a one-hot constraint and a wall-domain formulation.

[Dec2003] discusses constraint programming as a declarative framework for modeling problems that require solutions to satisfy a number of constraints.

[Ven2015b] discusses the use of penalties versus rewards for constraints.

[Yur2022] introduces a hybrid classical-quantum framework based on the Frank-Wolfe algorithm for solving quadratic, linearly-constrained, binary optimization problems.

Constraints: Linear Inequality (Penalty Functions)#

For inequality constraints of the form \(\vc{D}\vc{x}\le \vc{d}\), which are slightly more complex than linear equality constraints you can reduce the inequalities to equalities by introducing slack variables.

The inequality constraint \(\ip{\vc{D}_i}{\vc{x}}-d_i \le 0\), where \(\vc{D}_i\) is row \(i\) of \(\vc{D}\), can be rewritten as the equality \(\ip{\vc{D}_i}{\vc{x}}-d_i +\xi_i = 0\) by introducing a nonnegative slack variable \(\xi_i\ge 0\).

The slack variable may need to take a value as large as \(d_i-\min_{\vc{x}} \ip{\vc{D}_i}{\vc{x}} = d_i-\sum_j \min(D_{i,j},0)\). This slack quantity may be naively represented with \(\lceil d_i-\sum_j \min(D_{i,j},0) \rceil\) bits (i.e., to represent slack quantities up to 5, you can use 5 bits, with one bit representing 1, one bit 2, etc). However, you can also use a more concise representation, \(\xi_i = \ip{\vc{2}}{\vc{a}_i}\) where \(\vc{a}_i\) is the binary representation of \(\xi_i\), and \(\vc{2}\) is a vector whose elements are the powers of 2. This binary representation enforces the requirement that \(\xi_i\ge 0\).

Inequality \(i\) may be enforced with the penalty \(\bigl(\ip{\vc{D}_i}{\vc{x}}-d_i + \ip{\vc{2}}{\vc{a}_i}\bigr)^2\) and all constraints can be represented by summing individual inequality penalties with independent weights \(m_i^\le\) as

where \(\vc{a}\) is the vector collecting all binary slack variables.

dimod can automate such reduction

for you. For example, the

add_linear_inequality_constraint()

method below adds a constraint, \(1 \le \sum_{i=0}^{1} x_i \le 2\), that at

least one of its two binary variables \(x_0, x_1\) take value one in the

best solution.

>>> import dimod

...

>>> bqm = dimod.BinaryQuadraticModel("BINARY")

>>> slacks = bqm.add_linear_inequality_constraint([(f"x{i}", 1) for i in range(2)],

... lagrange_multiplier=1, label="min1", lb=1, ub=2)

Example#

The example of Constraints: Linear Equality (Penalty Functions) solved the problem of finding a maximum independent set (MIS), where the graph is a cell in the Chimera topology, with an equality constraint, \(x_0 + x_1 = 1\), which requires that either node 0 or node 1, but not both, be selected. Because one of those two nodes had to be selected, the equality constraint forces a selection of the MIS \(0/1, 2, 3\) (either 0 or 1, and both of 2 and 3) and not the alternative MIS \(4, 5, 6, 7\).

This example uses \(m=2\) inequality constraints to select either one of two nodes or neither:

\(x_0 + x_1 \leq 1\)

\(x_4 + x_5 \leq 1\)

With the above two inequality constraints the constrained MIS can be either \(0/1, 2, 3\) or \(4/5, 6, 7\).

You can now use slack variables to transform the inequalities into equalities:

where slack variables \(a_0, a_1\) can take values 0 or 1.

Table 16 shows that a relative penalty is applied to the case of \(x_0 = x_1 = 1\) or \(x_4 + x_5 = 1\) when this penalty function is minimized (when this function is part of a BQM being minimized, its value is lowest for at least one of the possible values of ancillary variable \(a_0\) or \(a_1\)). In this table, column \(\vc{x}\) is all possible states of \(x_0,x_1\) or \(x_4, x_5\); column \(\mathbf{P_{a=0}}\) and \(\mathbf{P_{a=1}}\) are the values of the penalty function if the ancillary variable \(a_0/a_1=0\) or \(a_0/a_1=1\) respectively; and the final column, \(\mathbf{\min_{a}P}\), is the value the penalty function adds to the minimized BQM (the minimum penalty of \(\mathbf{P_{a=0}}\) or \(\mathbf{P_{a=1}}\)).

\(\vc{x}\) |

\(\mathbf{P_{a=0}}\) |

\(\mathbf{P_{a=1}}\) |

\(\mathbf{\min_{a}P}\) |

|---|---|---|---|

\(0,0\) |

\(0\) |

\(-1\) |

\(-1\) |

\(0,1\) |

\(-1\) |

\(0\) |

\(-1\) |

\(1,0\) |

\(-1\) |

\(0\) |

\(-1\) |

\(1,1\) |

\(0\) |

\(3\) |

\(0\) |

As in the previous section, you can use the dwave_networkx package for the MIS QUBO. The code below combines the MIS and penalty QUBOs, weighting the constraint at 1.5 so the penalty for breaking these (hard) constraints is a bit higher than flipping a variable.

>>> import dwave_networkx as dnx

>>> import dimod

...

>>> # Create a BQM for the MIS

>>> G = dnx.chimera_graph(1, 1, 4)

>>> Q_mis = dnx.algorithms.independent_set.maximum_weighted_independent_set_qubo(G)

>>> bqm = dimod.BQM.from_qubo(Q_mis)

>>> # Create BQMs for the constraints

>>> Q_constraint1 = {(0, 0): -1, (1, 1): -1, ('a0', 'a0'): -1,

... (0, 1): 2, (0, 'a0'): 2, (1, 'a0'): 2}

>>> Q_constraint2 = {(4, 4): -1, (5, 5): -1, ('a1', 'a1'): -1,

... (4, 5): 2, (4, 'a1'): 2, (5, 'a1'): 2}

>>> bqm_constraint1 = dimod.BQM.from_qubo(Q_constraint1)

>>> bqm_constraint1.scale(1.5)

>>> bqm_constraint2 = dimod.BQM.from_qubo(Q_constraint2)

>>> bqm_constraint2.scale(1.5)

>>> # Create a combined BQM

>>> bqm.update(bqm_constraint1)

>>> bqm.update(bqm_constraint2)

>>> # Print the best solutions

>>> print(dimod.ExactSolver().sample(bqm).lowest(atol=0.8))

0 4 5 6 7 1 2 3 a0 a1 energy num_oc.

0 0 0 1 1 1 0 0 0 1 0 -6.0 1

2 0 1 0 1 1 0 0 0 1 0 -6.0 1

3 0 0 0 0 0 1 1 1 0 1 -6.0 1

5 1 0 0 0 0 0 1 1 0 1 -6.0 1

1 0 1 1 1 1 0 0 0 1 0 -5.5 1

4 1 0 0 0 0 1 1 1 0 1 -5.5 1

['BINARY', 6 rows, 6 samples, 10 variables]

The four best solutions meet the constrained MIS problem,

{5, 6, 7} selects 5, not 4, and neither 0 nor 1

{4, 6, 7} selects 4, not 5, and neither 0 nor 1

{1, 2, 3} selects 1, not 0, and neither 4 nor 5

{0, 2, 3} selects 0, not 1, and neither 4 nor 5

while the next two, with higher energy, solve the unconstrained MIS problem.

Constraints: Nonlinear (Penalty Functions)#

The previous sections show how penalty functions for equality and inequality constraints may be constructed, with inequality constraints requiring ancillary variables.

Given sets of feasible \(F\) and infeasible \(\overline{F}\) configurations, and a requirement that \(\min_{\vc{a}} P(\vc{x},\vc{a}) = o\) for \(\mathbf{x}\in F\) and \(\min_{\vc{a}} P(\mathbf{x},\vc{a}) \ge o+1\) for \(\mathbf{x}\in \overline{F}\) for some constant \(o\) representing the objective value of feasible configurations, you can formulate a quadratic penalty as

with

For Boolean indicator variables \(\{\alpha_k(j)\}\) for each \(1 \le j \le |F|\) and for each allowed configuration \(\vc{a}_k\) you then have

To break the coupling of \(\alpha_k(j)\) to \(\vc{w}^{x,a}\) and \(\vc{w}^{a,a}\) and obtain a linear problem, introduce \(\vc{v}_k^{x,a}(j) = \alpha_k(j) \vc{w}^{x,a}\) and \(\vc{v}_k^{a,a}(j) = \alpha_k(j) \vc{w}^{a,a}\). This requirement is enforced with

The resulting mixed integer program can then be solved with any MIP solver. If there is no feasible solution for a given number of ancillary variables or specified connectivity, then these requirements must be relaxed by introducing additional ancillary variables of additional connectivity.

Further Information#

[Qui2021] transforms to QUBOs the constrained combinatorial optimization (COPT) problem of maximum k-colorable subgraph (MkCS) with its linear constraints stated as (1) linear constraints and (2) nonlinear equality constraints.

CSP (Inverse Verification Technique)#

One approach to solving a constraint satisfaction problem (CSP), \(C(\vc{x}) \equiv \bigwedge_{\alpha=1}^{|\mathcal{C}|} C_\alpha(\vc{x}_\alpha)\), exploits the fact that NP-hard decision problems can be verified in polynomial time. If the CSP corresponding to \(C(\vc{x})\) is in NP, you can write the output \(z \Leftrightarrow C(\vc{x})\) as a Boolean circuit whose size is polynomial in the number of Boolean input variables. The Boolean circuit \(z\Leftrightarrow C(\vc{x})\) can be converted to an optimization objective using Boolean operations formulated as QUBO penalty functions, with each gate contributing the corresponding penalty function—see Elementary Boolean Operations.

The resulting optimization objective—which also has a polynomial number of variables and at a feasible solution \(\vc{x}^\star\), \(z_\star=1\) evaluates to 0—may be run in reverse to infer inputs from the desired true output.

To accomplish this, clamp the output of the circuit to \(z=1\) by adding \(-Mz\) (for sufficiently large \(M\)) to the objective, and then minimize with respect to all variables.[13] If you obtain a solution for which the objective evaluates to 0, you have a feasible solution to the CSP.

Alternatively, substitute \(z=1\) into the objective and eliminate \(z\) from the problem.

Example#

The example of Constraints: Linear Equality (Penalty Functions) solved the problem of finding a maximum independent set (MIS), where the graph is a cell in the Chimera topology, with an equality constraint, \(x_0 + x_1 = 1\), which requires that either node 0 or node 1, but not both, be selected. (Because one of those two nodes has to be selected, the equality constraint forces a selection of the constrained MIS \(0, 2, 3\) or \(1, 2, 3\) and not the unconstrained MIS \(4, 5, 6, 7\).) That example converted the constraint into a QUBO by squaring the subtraction of the equation’s right side from the left.

This example solves the same problem but converts the constraint into a Boolean gate, in this case, the XOR gate.

You can mathematically formulate a QUBO for the XOR gate as shown in Elementary Boolean Operations; this example use the dimod package for the XOR QUBO. The code below combines the MIS and penalty QUBOs, weighting the XOR constraint at 1.5 so the penalty for breaking this (hard) constraint is a bit higher than flipping a variable, and setting \(z\) to a negative value substantially lower than other linear biases.

>>> import dwave_networkx as dnx

>>> import dimod

...

>>> from dimod.generators import xor_gate

>>> # Create a BQM for the MIS

>>> G = dnx.chimera_graph(1, 1, 4)

>>> Q_mis = dnx.algorithms.independent_set.maximum_weighted_independent_set_qubo(G)

>>> bqm = dimod.BQM.from_qubo(Q_mis)

>>> # Create a BQM for the constraint

>>> bqm_xor = xor_gate(0, 1, 'z', 'aux')

>>> bqm_xor.scale(1.5)

>>> # Fix z to 1

>>> Q_fix = {('z', 'z'): -3}

>>> bqm_fix = dimod.BQM.from_qubo(Q_fix)

>>> # Create a combined BQM

>>> bqm.update(bqm_xor)

>>> bqm.update(bqm_fix)

>>> # Print the best solutions

>>> print(dimod.ExactSolver().sample(bqm).lowest(atol=0.8))

0 4 5 6 7 1 2 3 z aux0 aux1 energy num_oc.

0 1 0 0 0 0 0 1 1 1 0 0 -6.0 1

1 0 0 0 0 0 1 1 1 1 0 1 -6.0 1

['BINARY', 2 rows, 2 samples, 11 variables]

The two best solutions meet the constrained MIS problem

Further Information#

The dwave-examples Factoring example uses this technique to solve a factoring problem as QUBOs. It shows that this formulation yields low requirements on both connectivity and precision constraints, making it suitable for the D‑Wave system.

The Example Reformulation: Circuit Fault Diagnosis example may also benefit from this technique.

Elementary Boolean Operations#

As demonstrated in the CSP (Inverse Verification Technique) section, an often useful approach to consider it to reformulate your problem using BQMs derived from Boolean operations.

Table 17 shows several constraints useful in building Boolean circuits.

Constraint |

Penalty[14] |

|---|---|

\(z \Leftrightarrow \neg x\) |

\(2xz-x-z+1\) |

\(z \Leftrightarrow x_1 \vee x_2\) |

\(x_1 x_2 + (x_1+x_2)(1-2z) + z\) |

\(z \Leftrightarrow x_1 \wedge x_2\) |

\(x_1 x_2 - 2(x_1+x_2)z +3z\) |

\(z \Leftrightarrow x_1 \oplus x_2\) |

\(2x_1x_2 -2(x_1+x_2)z - 4(x_1+x_2)a +4az +x_1+x_2+z + 4a\) |

The XOR operator, \(\oplus\), requires the introduction of a single ancillary variable \(a\).

Example: OR Gate as a Penalty#

This example shows how the Boolean OR formulation given in the Table 18 table works.

In Table 18, column \(\mathbf{x_1,x_2}\) is all possible states of the gate’s inputs; column \(\mathbf{x_1 \vee x_2}\) is the corresponding output values of a functioning gate; column \(\mathbf{P(z=x_1 \vee x_2)}\) is the value the penalty function adds to the energy of the objective function when the gate is functioning (zero, a functioning gate must not be penalized) while column \(\mathbf{P(z \ne x_1 \vee x_2)}\) is the value the penalty function adds when the gate is malfunctioning (nonzero, the objective function must be penalized with a higher energy).

\(\mathbf{x_1,x_2}\) |

\(\mathbf{x_1 \vee x_2}\) |

\(\mathbf{P(z=x_1 \vee x_2)}\) |

\(\mathbf{P(z \ne x_1 \vee x_2)}\) |

|---|---|---|---|

\(0,0\) |

\(0\) |

\(0\) |

\(1\) |

\(0,1\) |

\(1\) |

\(0\) |

\(1\) |

\(1,0\) |

\(1\) |

\(0\) |

\(1\) |

\(1,1\) |

\(1\) |

\(0\) |

\(3\) |

For example, for the state \(x_1, x_2=1,1\) of the fourth row, when \(z=x_1 \vee x_2 = 1\),

not penalizing the valid solution, whereas for \(z \ne x_1 \vee x_2 = 0\),

adding an energy cost of \(3\) to the solution that violates the constraint.

Examples#

Section Native QPU Formulations: Ising and QUBO above derives a QUBO for a NOT gate as a constraint.

The Example Reformulation: Circuit Fault Diagnosis example derives AND and XOR gates.

The Non-Quadratic (Higher-Degree) Polynomials also derives an AND gate.

Further Information#

[Jue2016] provides numerous Boolean operations in the Ising model. These can be converted to QUBO penalty functions as shown in Native QPU Formulations: Ising and QUBO.

Example Reformulation: Map Coloring#

The map-coloring problem is to assign a color to each region of a map such that any two regions sharing a border have different colors.

Fig. 43 Coloring a map of Canada with four colors.#

A solution to a map-coloring problem for a map of Canada with four colors is shown in Figure 43.

This example is intended to demonstrate the use of the reformulations

techniques of this chapter. In practice, you could simply use the

min_vertex_color() function to solve

the problem. Ocean software documentation

demonstrates solutions to the map-coloring problem in the

Large Map Coloring and

Map Coloring: Hybrid DQM Sampler

examples. The dwave-examples GitHub

repo also demonstrates solutions to the map-coloring problem.

[Dwave4] describes this procedure.

Step 1: State the Objectives and Constraints#

As noted in the General Guidance on Reformulating section, the first step in solving a problem is to state objectives and constraints. For this problem, the colors selected must meet two constraints:

Each region is assigned one color only, of \(C\) possible colors.

The color assigned to one region cannot be assigned to adjacent regions.

Any solution that meets both constraints is a good solution. Because this problem has no preferences among such good solutions, it has no objective, just constraints. (For a map problem that ranks colors—for example, it prefers to color regions in yellow rather than green—you would also formulate an objective that minimizes the use of the least preferred colors.)

Step 2: Select Variables#

One approach to mathematically stating this problem is to think of the regions as variables representing the possible set of colors. What type of values should these variables represent?

To represent a set of values such as [blue, green, red, yellow] you

can use various numerical schemes: integer, discrete, and binary variables.

The Map Coloring: Hybrid DQM Sampler

example uses discrete variables and the DQM solver in the Leap service; this

example uses binary variables to enable solution directly on a QPU solver.

More specifically, this example maps the \(C\) possible colors to a unary encoding: represent each region by \(C\) binary variables, one for each possible color, which is set to value \(1\) if selected, while the remaining \(C-1\) variables are \(0\). These are known as one-hot variables.

Table 19 shows a unary encoding for representing colors for a map with \(C=4\) possible colors, where \(q_B\) is a variable representing blue, \(q_G\) green, \(q_R\) red, and \(q_Y\) yellow.

Color |

Unary Encoding |

|---|---|

Blue |

\(q_B,q_G,q_R,q_Y = 1,0,0,0\) |

Green |

\(q_B,q_G,q_R,q_Y = 0,1,0,0\) |

Red |

\(q_B,q_G,q_R,q_Y = 0,0,1,0\) |

Yellow |

\(q_B,q_G,q_R,q_Y = 0,0,0,1\) |

Step 3: Formulate Constraints (Constraint 1)#

The first constraint is that each region is assigned one color only, of \(C\) possible colors. It is formulated here using the truth-table approach of the Native QPU Formulations: Ising and QUBO section.

Simplify the problem by first considering a two-color problem. Given \(C=2\) colors that are unary encoded as \(q_B,q_G\), the constraint is reduced to feasible states being either blue selected, \(q_B,q_G=1,0\), or green selected, \(q_B,q_G=0,1\), but not both or neither selected.

Try to fit this simple constraint to the QUBO format for two variables:

Table 20 shows all the possible states for a single-region, two-color problem. In this table, columns \(\mathbf{q_B}\) and \(\mathbf{q_G}\) are all possible states of the two variables encoding the region’s two colors; column Constraint shows whether or not the state of the variables meet the constraint that one color is selected; column \(\mathbf{\text{E}(a_i, b_{i,j}; q_i)}\) is the QUBO’s energy for each state.

\(\mathbf{q_B}\) |

\(\mathbf{q_G}\) |

Constraint |

\(\mathbf{\text{E}(a_i, b_{i,j}; q_i)}\) |

|---|---|---|---|

0 |

0 |

Violates |

\(0\) |

0 |

1 |

Meets |

\(a_G\) |

1 |

0 |

Meets |

\(a_B\) |

1 |

1 |

Violates |

\(a_B+a_G+b_{B,G}\) |

QUBO coefficients to represent this constraint can be guessed from looking at Table 20 and the following considerations:

Both colors are equally preferred, so setting \(a_B=a_G\) ensures identical energy for both states that meet the constraint (\(q_B, q_G=0,1\) and \(q_B, q_G=1,0\)).

The state \(q_B, q_G=0,0\), which violates the constraint, has energy \(0\), so to ensure that the non-violating states have lower energy, set \(a_B = a_G < 0\).

Having set the minimum energy to negative for the valid states, setting \(a_B+a_G+b_{B,G}=0\) for non-valid state \(q_B, q_G=1,1\) ensures that state has a higher energy.

One solution[15] is to set \(a_B=a_G=-1\) and \(b_{B,G}=2\). Setting these values for the linear and quadratic coefficients sets the minimum energy for this QUBO to \(-1\) for both valid states and zero for the states that violate the constraint; that is, this QUBO has lowest energy if just one color is selected (only one variable is \(1\)), where either color (variable \(q_B\) or \(q_G\)) has an equal probability of selection.

Getting Started with D-Wave Solvers discusses the effects of different choices of values in the section on problem scaling. See also the considerations discussed in the Imprecision of Biases section.

The two-color formulation above can be easily expanded to a three-color problem: \(C=3\) possible colors are encoded as \(q_B,q_G,q_R\). The constraint to minimize in QUBO format is

Coefficients that minimize the QUBO can be guessed by applying the previous considerations more generically. All colors are equally preferred, so set \(a_i=a\) to ensure identical energy for states that meet the constraint. Simplify by setting \(b_{i,j}=b=2\) and \(a=-1\) for a QUBO,

Table 21 enumerates all states of the constraint for three possible colors. The meanings of the columns in this table are identical to those for Table 20 above.

\(\mathbf{q_B,q_G,q_R}\) |

Constraint |

\(\mathbf{\text{E}(a=-1, b=2; q_i)}\) |

|---|---|---|

0,0,0 |

Violates |

\(-(0) +2(0)=0\) |

0,0,1 |

Meets |

\(-(1) + 2(0)=-1\) |

0,1,0 |

Meets |

\(-(1) + 2(0)=-1\) |

0,1,1 |

Violates |

\(-(2) + 2(1)=0\) |

1,0,0 |

Meets |

\(-(1) + 2(0)=-1\) |

1,0,1 |

Violates |

\(-(2) + 2(1)=0\) |

1,1,0 |

Violates |

\(-(2) + 2(1)=0\) |

1,1,1 |

Violates |

\(-(3) + 2(3)=3\) |

Again, the minimum energy for this QUBO is \(-1\) for valid states and higher for states that violate the constraint; that is, this QUBO has lowest energy if just one color is selected (one variable is \(1\)), where the three colors (variables) have equal probability of selection.

For the four-color map problem, the one-color-per-region constraint can likewise be simplified to a QUBO objective, with \(b_{i,j}=b=2\) and \(a_i=a=-1\),

Step 3: Formulate Constraints (Constraint 2)#

The second constraint is that a color assigned to one region cannot be assigned to adjacent regions.

What degree is such a constraint’s formulation likely to have? Because the constraint acts between variable pairs representing adjacent regions, you can expect that relationships between variables are reducible to quadratic. That is, this constraint too should fit a QUBO format.

Such a QUBO should have a highest energy (a penalty) when the same colors are selected for adjacent regions. That is, if blue is selected on one side of a shared border, for example, the QUBO has higher energy if blue is also selected on the other side.

Similar to the previous constraint, start with a simplified problem of two variables: the variable for green in region \(r1\), \(q_{G}^{r1}\), and for green in adjacent region \(r2\), \(q_{G}^{r2}\). The constraint is that these variables (representing color green in adjacent regions \(r1\) and \(r2\)) not have the same value (True for both regions).

Table 22 shows all the possible states for a problem with a single color, \(c\), and two adjacent regions, \(r1, r2\). The meanings of the columns in this table are identical to those for Table 20 above.

\(\mathbf{q_c^{r1}}\) |

\(\mathbf{q_c^{r2}}\) |

Constraint |

\(\mathbf{\text{E}(a_i, b_{i,j}; q_i)}\) |

|---|---|---|---|

0 |

0 |

Meets |

\(0\) |

0 |

1 |

Meets |

\(a_c^{r2}\) |

1 |

0 |

Meets |

\(a_c^{r1}\) |

1 |

1 |

Violates |

\(a_c^{r1}+a_c^{r2}+b_c^{r1,r2}\) |

Coefficients to represent this constraint in the two-variable QUBO,

can be derived from the following considerations:

The state \(q_c^{r1}, q_c^{r2}=0,0\), which meets the constraint, has energy \(0\), so to ensure that other non-violating states have similar low energy, set \(a_c^{r1} = a_c^{r2} = 0\).

Having set the minimum energy to zero for the valid states, requiring \(a_c^{r1}+a_c^{r2}+b_c^{r1, r2}>0\) for non-valid state \(q_c^{r1}, q_c^{r2}=1,1\) ensures that state has a higher energy. It also means that \(b_c^{r1, r2}\) must be positive.

Configuring \(a^{r1}_{c} = a^{r2}_{c} = 0\) and \(b_{c}^{r1,r2} = 1\) sets a higher energy for the state \(q_{c}^{r1} = q_c^{r2}\) (color \(c\) selected in both regions), penalizing that invalid state, while leaving the valid three states with an energy of \(0\).

The sum of QUBOs for both types of constraints has lowest energy when simultaneously each region is assigned a single color and adjacent regions do not select the same colors.

Example Reformulation: Circuit Fault Diagnosis#

Fault diagnosis is the combinational problem of quickly localizing failures as soon as they are detected in systems. Circuit fault diagnosis (CFD) is the problem of identifying a minimum-sized set of gates that, if faulty, explains an observation of incorrect outputs given a set of inputs.

This example is intended to demonstrate the use of the reformulations techniques of this chapter. In practice, you could use Ocean software’s Boolean constraints as in the Multiple-Gate Circuit example.

A Short Digression: An Intuitive Explanation of the CFD Solution#

To understand the relationship between the CFD solutions and low-energy configurations, it’s helpful to look at an even smaller example.

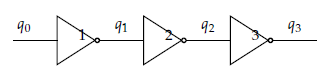

Fig. 45 The circuit in Figure 45 has three NOT gates in series, with an input \(q_0\), an output \(q_3\), and intermediate input/outputs \(q_1\) and \(q_2\).#

Table 23 shows energy levels for all possible circuit states with an incorrect output for an objective function that penalizes by a value of \(1\) any malfunctioning gate.

In this table, the first column, Circuit Input (\(\mathbf{q_0}\)), is the binary input value applied to the circuit in testing, and the second column, Incorrect Output (\(\mathbf{q_3}\)), is the measured output of the circuit with an error detected. Columns \(\mathbf{q_1}\) and \(\mathbf{q_2}\) are all possible intermediate input/outputs of the circuit’s gates. Column Faulty Gate shows which gates are malfunctioning given the input and output states. Column Energy is the energy level of the objective function.

Circuit Input (\(\mathbf{q_0}\)) |

Incorrect Output (\(\mathbf{q_3}\)) |

\(\mathbf{q_1}\) |

\(\mathbf{q_2}\) |

Faulty Gate |

Energy |

|---|---|---|---|---|---|

\(0\) |

\(0\) |

\(0\) |

\(0\) |

1,2,3 |

\(3\) |

\(0\) |

\(1\) |

1 |

\(1\) |

||

\(1\) |

\(0\) |

3 |

\(1\) |

||

\(1\) |

\(1\) |

2 |

\(1\) |

||

\(1\) |

\(1\) |

\(0\) |

\(0\) |

2 |

\(1\) |

\(0\) |

\(1\) |

3 |

\(1\) |

||

\(1\) |

\(0\) |

1 |

\(1\) |

||

\(1\) |

\(1\) |

1,2,3 |

\(3\) |

The minimum-sized set of gates that, if faulty, explains the observation of incorrect outputs given a set of inputs, are the single malfunctioning gates of rows 2–7. These have the lowest non-zero energy for the serial NOT circuit.

Step 1: State the Objectives and Constraints#

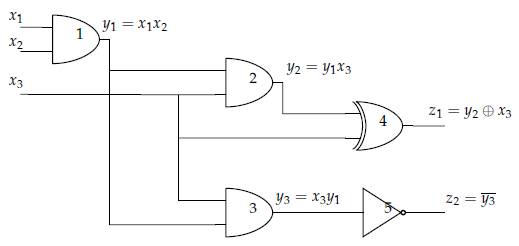

As noted in the General Guidance on Reformulating section, the first step in solving a problem is to state objectives and constraints. For this problem, the Boolean gates constrain outputs based on gates’ inputs; for example, the output of AND gate 1 should be \(x_1 \wedge x_2\).

The objective to be minimized is the number of faulty gates given the circuit inputs and outputs.

Good solution are those that violate the fewest of the constraints representing the circuits gates while still producing the tested inputs and outputs.

Step 2: Select Variables#

The approach used in this example is to represent gate inputs and outputs with variables—the circuit is binary and so, naturally, are the variables.

Step 3: Formulate Constraints#

This example maps the circuit’s gates to penalty functions using the formulations of the Elementary Boolean Operations section. These penalty functions increase the energy level of the problem’s objective function by penalizing non-valid states of the Boolean operations, which represent malfunctioning gates.

The NOT gate

NOT can be represented as penalty function

\[z \Leftrightarrow \neg x: \qquad 2xz-x-z+1.\]Table 24 shows that this function penalizes states that represent a malfunctioning gate while no penalty is applied to a functioning gate. In this table, column in is all possible states of the gate’s inputs; column \(\mathbf{out_{valid}}\) is the corresponding output values of a functioning gate while \(\mathbf{out_{fault}}\) is the corresponding output values of a malfunctioning gate, which are simply \(out_{fault} = \neg out_{valid}\); column \(\mathbf{P_{valid}}\) is the value the penalty function adds to the energy of the objective function when the gate is functioning (zero because a functioning gate must not be penalized) while column \(\mathbf{P_{fault}}\) is the value the penalty function adds when the gate is malfunctioning (nonzero, the objective function must be penalized with a higher energy).

Table 24 Boolean NOT Operation as a Penalty.# in

\(\mathbf{out_{valid}}\)

\(\mathbf{out_{fault}}\)

\(\mathbf{P_{valid}}\)

\(\mathbf{P_{fault}}\)

\(0\)

\(1\)

\(0\)

\(0\)

\(1\)

\(1\)

\(0\)

\(1\)

\(0\)

\(1\)

For example, the state \(in, out_{valid}=0,1\) of the first row is represented by the penalty function with \(x=0\) and \(z = 1 = \neg x\). For this functioning gate, the value of \(P_{valid}\) is

\[2xz-x-z+1 = 2 \times 0 \times 1 - 0 - 1 + 1 = -1+1=0,\]not penalizing the valid configuration. In contrast, the state \(in, out_{fault}=0,0\) of the first row is represented by the penalty function with \(x=0\) and \(z = 0 \ne \neg x\). For this malfunctioning gate, the value of \(P_{fault}\) is

\[2xz-x-z+1 = 2 \times 0 \times 0 -0 -0 +1 =1,\]adding an energy cost of \(1\) to the incorrect configuration.

The AND gate

AND can be represented as penalty function

\[z \Leftrightarrow x_1 \wedge x_2: \qquad x_1 x_2 - 2(x_1+x_2)z +3z.\]Table 25 shows that this function penalizes states that represent a malfunctioning gate while no penalty is applied to a functioning gate. The meanings of the columns in this table are identical to those for the NOT gate above.

Table 25 Boolean AND Operation as a Penalty.# in

\(\mathbf{out_{valid}}\)

\(\mathbf{out_{fault}}\)

\(\mathbf{P_{valid}}\)

\(\mathbf{P_{fault}}\)

\(0,0\)

\(0\)

\(1\)

\(0\)

\(3\)

\(0,1\)

\(0\)

\(1\)

\(0\)

\(1\)

\(1,0\)

\(0\)

\(1\)

\(0\)

\(1\)

\(1,1\)

\(1\)

\(0\)

\(0\)

\(1\)

The XOR gate

XOR can be represented as penalty function

\[z \Leftrightarrow x_1 \oplus x_2: \qquad 2x_1x_2 - 2(x_1+x_2)z - 4(x_1+x_2)a + 4az + x_1 + x_2 + z + 4a,\]where \(a\) is an ancillary variable as described in the Non-Quadratic (Higher-Degree) Polynomials section (and shown below).

Table 26 shows that no penalty is applied to a functioning gate when this penalty function is minimized (when this function is part of an objective function being minimized, its value is zero for at least one of the possible values of ancillary variable \(a\)). In this table, column in is all possible states of the gate’s inputs \(x_1,x_2\); column \(\mathbf{out}\) is the corresponding output values of a functioning gate \(x_1 \oplus x_2\); columns \(\mathbf{P_{a=0}}\) and \(\mathbf{P_{a=1}}\) are the values of the penalty function if the ancillary variable \(a=0\) or \(a=1\) respectively; and the final column, \(\mathbf{\min_{a}P}\), is the value the penalty function adds to the minimized objective function (the minimum penalty of \(\mathbf{P_{a=0}}\) or \(\mathbf{P_{a=1}}\)).

Table 26 Boolean XOR Operation as a Penalty: Functioning Gate.# in

\(\mathbf{out}\)

\(\mathbf{P_{a=0}}\)

\(\mathbf{P_{a=1}}\)

\(\mathbf{\min_{a}P}\)

\(0,0\)

\(0\)

\(0\)

\(4\)

\(0\)

\(0,1\)

\(1\)

\(0\)

\(4\)

\(0\)

\(1,0\)

\(1\)

\(0\)

\(4\)

\(0\)

\(1,1\)

\(0\)

\(4\)

\(0\)

\(0\)

Table 27 shows that a non-zero penalty is applied to a malfunctioning gate. In this table, column \(\mathbf{out}\) is the output values of a malfunctioning gate \(out = \neg (x_1 \oplus x_2)\); the other columns are identical to those for Table 26 above.

Table 27 Boolean XOR Operation as a Penalty: Malfunctioning Gate.# in

\(\mathbf{out}\)

\(\mathbf{P_{a=0}}\)

\(\mathbf{P_{a=1}}\)

\(\mathbf{\min_{a}P}\)

\(0,0\)

\(1\)

\(1\)

\(9\)

\(1\)

\(0,1\)

\(0\)

\(1\)

\(1\)

\(1\)

\(1,0\)

\(0\)

\(1\)

\(1\)

\(1\)

\(1,1\)

\(1\)

\(1\)

\(1\)

\(1\)

Normalizing the Penalty Functions#